
Why Automations Break: Six Degrees of Systemic Failure

In the modern enterprise, automation is sold as the ultimate mitigator of friction. Operations teams deploy workflows to "save time" and "scale effort." However, without deliberate architecture, these systems often introduce a new and more dangerous type of friction: Systemic Fragility.

As you add more connections between disparate APIs, you are not just building efficiency; you are building a house of cards where every card is controlled by a third party you do not own. This article diagnoses why business automations break and defines the principles required to build for resilience.

Use this diagnostic lens to identify if your current workflows are building leverage or technical debt.

What People Think This Solves

Executives and operators typically approach automation with a "Tool-First" mindset. The belief is that by implementing a platform like Zapier, Make, or Power Automate, the following problems will vanish:

- Employee Error: "Machines don't make mistakes; people do."

- Latency: "Data will move instantly, reducing speed-to-lead."

- Cost: "Automation is cheaper than a human virtual assistant."

- Complexity: "The tool will handle the logic so we don't have to."

This is the "Set and Forget" Fallacy. It treats automation as a kitchen appliance rather than a distributed software system. While tools reduce manual friction, they replace it with architectural complexity that requires a completely different set of management skills.

What Actually Breaks: The Six Degrees of Failure

In a professional diagnostic audit, we find that automations rarely fail due to "software bugs." They fail because of Structural Friction. Here are the six most common ways automation systems collapse at scale:

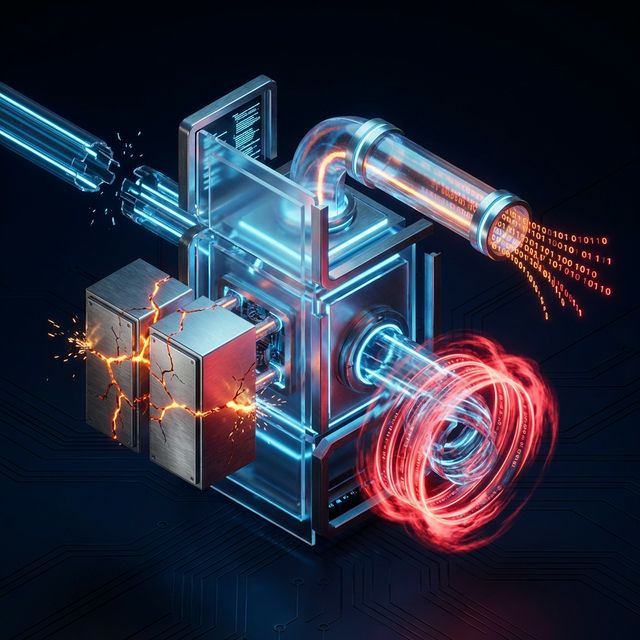

1. Tight Coupling (The Domino Effect)

Tight coupling occurs when App A depends directly on the specific output format of App B. This is a foundational software engineering risk, as explained in Martin Fowler's guide to coupling. If you change a custom field in your CRM (App B), the automation in App A breaks instantly. In a tightly coupled system, you cannot change one part of your business without a cascade of failures across the stack. This turns your "flexible" automation into a "distributed monolith" that freezes your business logic in time, a concept we explore further in system design patterns.
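
One common way to loosen this coupling is to translate every inbound record through a single mapping layer, so downstream steps depend on your own field names rather than the CRM's. The following Python sketch assumes a hypothetical CRM whose fields are named `Email`, `cf_lead_src_2`, and `Company_Name`; the `Lead` type and `from_crm` helper are illustrative, not part of any platform's API.

```python
from dataclasses import dataclass

# Hypothetical mapping: internal field name -> CRM field name.
# When a CRM admin renames a custom field, only this dict changes.
CRM_FIELD_MAP = {
    "email": "Email",
    "lead_source": "cf_lead_src_2",
    "company": "Company_Name",
}


@dataclass
class Lead:
    """Internal contract that downstream automations depend on."""
    email: str
    lead_source: str
    company: str


def from_crm(record: dict) -> Lead:
    """Translate a raw CRM record into the internal Lead contract.

    Raises KeyError naming the missing CRM fields, so a rename fails
    loudly at this boundary instead of breaking every downstream step.
    """
    missing = [crm for crm in CRM_FIELD_MAP.values() if crm not in record]
    if missing:
        raise KeyError(f"CRM record missing expected fields: {missing}")
    return Lead(**{internal: record[crm] for internal, crm in CRM_FIELD_MAP.items()})


lead = from_crm({"Email": "a@example.com", "cf_lead_src_2": "webinar", "Company_Name": "Acme"})
```

When the CRM field is renamed, the fix is a one-line change to the mapping, and the failure surfaces as a clear error at the boundary instead of a cascade downstream.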

2. Silent API Drift

API providers (Salesforce, Stripe, HubSpot) update their systems constantly. Often, these are "minor" updates that don't trigger an error in your automation tool, but they change how data is mapped. A phone number format changes, or a hidden field becomes required. The automation still "completes," but the data arriving at the destination is corrupted. This is Silent Failure: the most expensive kind of break because you don't know it happened until your reports are ruined three months later.
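
The usual defense against silent drift is to validate each payload against an explicit contract before writing it anywhere, so that a format change fails loudly instead of completing quietly. This is a minimal Python sketch; the field names, the expected types, and the choice of E.164 as the required phone format are assumptions for illustration.

```python
import re

# Hypothetical contract for what the destination system expects.
# E.164 phone format: "+" then up to 15 digits, first digit non-zero.
E164 = re.compile(r"^\+[1-9]\d{1,14}$")
EXPECTED_TYPES = {"email": str, "phone": str, "amount_cents": int}


def validate_payload(payload: dict) -> list[str]:
    """Return a list of contract violations (an empty list means valid)."""
    problems = []
    for field, expected in EXPECTED_TYPES.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            problems.append(
                f"{field}: expected {expected.__name__}, got {type(payload[field]).__name__}"
            )
    phone = payload.get("phone")
    if isinstance(phone, str) and not E164.match(phone):
        problems.append(f"phone not in E.164 format: {phone!r}")
    return problems


# An upstream "minor" change: the phone now arrives formatted for display.
drifted = {"email": "a@example.com", "phone": "(555) 010-2000", "amount_cents": 4900}
problems = validate_payload(drifted)  # reports the phone format violation
```

A non-empty result should route the payload to an error path rather than to the destination system.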

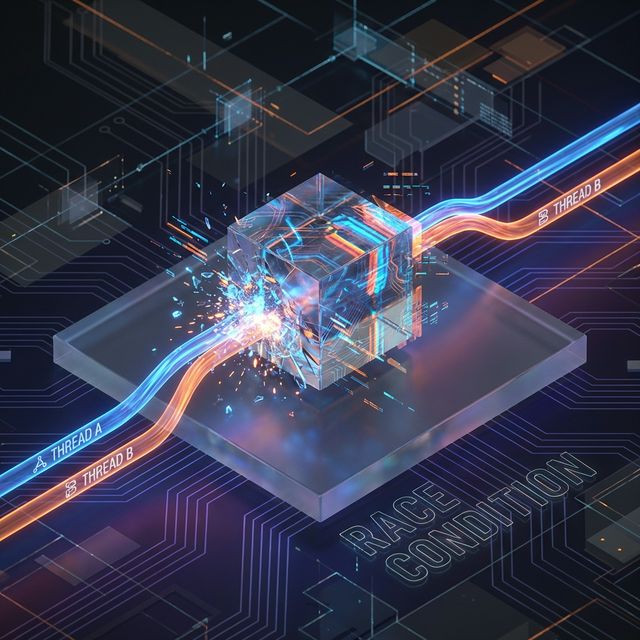

3. State Mismatch and Race Conditions

Automation tools are often stateless: they don't "remember" what happened a second ago. A race condition occurs when two automations trigger for the same event simultaneously. Automation #1 updates a record, but Automation #2 is still looking at the "old" version of that record. Automation #2 then overwrites the update from Automation #1. You end up with "Zombie Data" that doesn't reflect the current reality of the business.
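
One standard remedy is optimistic concurrency: every record carries a version number, and a write is accepted only if it was based on the latest version. The Python sketch below uses a hypothetical in-memory `RecordStore`; in a real database the version check and the write must happen as one atomic operation (for example, an `UPDATE ... WHERE version = ?` that reports how many rows it changed).

```python
from dataclasses import dataclass


class StaleWriteError(Exception):
    """Raised when a write is based on an outdated version of the record."""


@dataclass
class Record:
    data: dict
    version: int = 0


class RecordStore:
    """Hypothetical in-memory stand-in for a CRM table with versioned writes."""

    def __init__(self) -> None:
        self._records: dict[str, Record] = {}

    def read(self, key: str) -> Record:
        rec = self._records.setdefault(key, Record(data={}))
        # Return a copy so callers cannot mutate stored state directly.
        return Record(data=dict(rec.data), version=rec.version)

    def write(self, key: str, data: dict, expected_version: int) -> None:
        current = self._records.setdefault(key, Record(data={}))
        if current.version != expected_version:
            raise StaleWriteError(
                f"{key}: expected v{expected_version}, store has v{current.version}"
            )
        current.data = data
        current.version += 1


store = RecordStore()
a = store.read("lead-42")  # Automation #1 reads v0
b = store.read("lead-42")  # Automation #2 reads v0 at the same moment
store.write("lead-42", {**a.data, "status": "qualified"}, a.version)  # accepted, now v1
try:
    store.write("lead-42", {**b.data, "owner": "rep-7"}, b.version)  # based on v0: rejected
except StaleWriteError:
    fresh = store.read("lead-42")  # re-read v1 and reapply the change on top of it
    store.write("lead-42", {**fresh.data, "owner": "rep-7"}, fresh.version)
```

Automation #2's stale write is rejected instead of silently erasing Automation #1's update; it re-reads and reapplies its change on top of the current state.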

4. Semantic Hallucinations (The AI Risk)

In the era of LLMs, we are seeing a rise in Semantic Failures. An AI agent is tasked with "summarizing a lead's intent." It generates a beautifully written summary that happens to be factually incorrect or omits a crucial detail. Since the summary is plausible, no one notices the error until a sales rep mentions a non-existent pain point to a prospect. This is "Intelligence without Constraint."
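
One way to add constraint is to require the model to return each claim together with a verbatim quote from the source notes, then mechanically reject any claim whose quote cannot be found. The sketch below assumes the LLM call has already produced structured `Claim` objects (that call and the `Claim` shape are hypothetical). Note the limit: this catches invented details, but it cannot tell whether a claim is a fair reading of a quote that does exist.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str      # the model's statement about the lead
    evidence: str  # verbatim quote the model says supports the statement


def _normalize(s: str) -> str:
    # Lowercase and collapse whitespace so trivial formatting differences don't fail the check.
    return " ".join(s.lower().split())


def ungrounded_claims(claims: list[Claim], source_text: str) -> list[Claim]:
    """Return claims with empty evidence or evidence that does not appear in the source."""
    haystack = _normalize(source_text)
    return [
        c for c in claims
        if not c.evidence.strip() or _normalize(c.evidence) not in haystack
    ]


notes = "Call notes: They reconcile invoices by hand every Friday. Budget approved for Q3."
claims = [
    Claim("Manual invoice reconciliation is a pain point", "reconcile invoices by hand"),
    Claim("They are unhappy with their current CRM", "frustrated with Salesforce"),
]
flagged = ungrounded_claims(claims, notes)  # only the CRM claim lacks support
```

Any flagged claim should send the summary to human review rather than straight to the sales rep.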

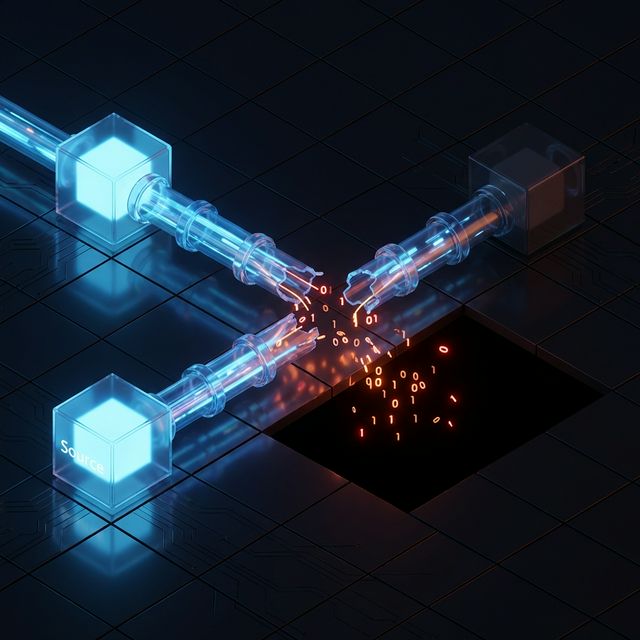

5. Dependency Circularity

This is a classic logic loop. Automation A updates a record in the CRM. The CRM update triggers Automation B. Automation B, as part of its logic, updates a field that triggers Automation A. The system enters an infinite loop, burning through your monthly task quota in minutes and potentially getting your IP banned by the API provider for "Abuse."
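
A common guard is to carry a record of which automations have already acted on a chain of events and refuse to re-enter it, with a hard cap on chain length as a backstop. This Python sketch assumes the chain can travel with the event (in practice, often a hidden field on the record); `Event`, `should_run`, `emit`, and `MAX_HOPS` are hypothetical names.

```python
from dataclasses import dataclass, field

MAX_HOPS = 3  # hypothetical cap; set it to the longest legitimate chain


@dataclass
class Event:
    record_id: str
    changes: dict
    # Names of automations that have already acted on this causal chain.
    chain: list[str] = field(default_factory=list)


def should_run(automation: str, event: Event) -> bool:
    """Refuse to run if this automation already acted on the chain or the chain is too long."""
    return automation not in event.chain and len(event.chain) < MAX_HOPS


def emit(automation: str, event: Event, changes: dict) -> Event:
    """Build the follow-up event, carrying the causal chain forward."""
    return Event(event.record_id, changes, event.chain + [automation])


e0 = Event("lead-42", {"status": "new"})  # a human edits the record in the CRM
e1 = emit("A", e0, {"score": 80})         # Automation A runs; its update triggers B
e2 = emit("B", e1, {"stage": "MQL"})      # Automation B runs; its update would trigger A
print(should_run("A", e2))                # False: the loop stops here
```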

6. The Fallacy of Transparency

Most automations are "Black Boxes." If a lead doesn't arrive in the CRM, no one knows where it died. Was it the webhook? The filter logic? The API credentials? Without Logging and Observability, you spend hours "trying things" instead of diagnosing the root cause. Transparency is the difference between a 5-minute fix and a 3-day outage, and it's why failing to account for the hidden cost of observability is so dangerous.
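
At minimum, each run needs one ID that ties together a log line per step, so a missing lead can be traced to the step where it died. This is a minimal sketch using Python's standard `logging` module; the step names mirror the questions above, and the email check is a hypothetical filter rule.

```python
import json
import logging
import uuid
from contextlib import contextmanager

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("automation")


@contextmanager
def step(run_id: str, name: str):
    """Emit a structured start/ok/failed log line for one step of a run."""
    log.info(json.dumps({"run_id": run_id, "step": name, "status": "start"}))
    try:
        yield
    except Exception as exc:
        log.error(json.dumps({"run_id": run_id, "step": name,
                              "status": "failed", "error": repr(exc)}))
        raise
    log.info(json.dumps({"run_id": run_id, "step": name, "status": "ok"}))


def process_lead(payload: dict) -> str:
    run_id = str(uuid.uuid4())  # one ID links every log line of this run
    with step(run_id, "receive_webhook"):
        email = payload["email"]
    with step(run_id, "filter"):
        if "@" not in email:  # hypothetical filter rule
            raise ValueError(f"invalid email: {email!r}")
    with step(run_id, "create_crm_record"):
        pass  # the CRM API call would go here
    return run_id
```

Searching the logs for a run ID then shows the last step that reported `start` without a matching `ok`.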

Why This Failure Is Expensive

The cost of a broken automation is not the price of the software license; it is the Loss of Systemic Trust.

- Lead Loss: Every minute an automation is down, potential revenue evaporates.

- Data Pollution: The cost of hiring a data analyst to clean up 10,000 corrupted CRM records is often 10x the cost of the original build.

- Operational Paralysis: When the system breaks too often, employees stop trusting the tech and revert to manual spreadsheets. You are now paying for expensive software that creates *more* work.

- Reputational Damage: Automated emails sent to the wrong person with the wrong data destroy brand authority instantly.

System Design Principles: The Rules of Resilience

To build automations that survive the "Real World," you must adhere to three core engineering principles:

1. Decoupling via Message Queues

Never let App A talk directly to App B if it's a mission-critical transaction. Use a buffer (like an Airtable queue, a database table, or a message queue such as Amazon SQS). This allows you to "pause" the system if one app is down without losing the data. You don't lose the lead; you just delay the processing.
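
The buffer pattern can be sketched as follows. This is a minimal, hypothetical Python illustration: `LeadBuffer` holds items in an in-memory `deque`, which would lose data on restart, so a production version would back it with a durable store such as a database table or SQS. The key behavior is that an item is removed only after delivery succeeds.

```python
from collections import deque
from typing import Callable


class LeadBuffer:
    """Hypothetical buffer between a webhook (App A) and a CRM (App B)."""

    def __init__(self, deliver: Callable[[dict], None]) -> None:
        self._queue: deque = deque()
        self._deliver = deliver  # pushes one item to App B; raises ConnectionError if B is down

    def enqueue(self, item: dict) -> None:
        self._queue.append(item)  # App A only ever writes here

    def drain(self) -> int:
        """Deliver items in order; stop at the first failure and keep the rest queued."""
        delivered = 0
        while self._queue:
            item = self._queue[0]  # peek: don't remove until delivery succeeds
            try:
                self._deliver(item)
            except ConnectionError:
                break  # App B is down: pause, nothing is lost
            self._queue.popleft()
            delivered += 1
        return delivered

    def __len__(self) -> int:
        return len(self._queue)


crm_state = {"up": False}
sent: list[dict] = []


def push_to_crm(item: dict) -> None:
    if not crm_state["up"]:
        raise ConnectionError("CRM unavailable")
    sent.append(item)


buf = LeadBuffer(push_to_crm)
buf.enqueue({"email": "a@example.com"})
first = buf.drain()   # CRM down: nothing delivered, lead stays queued
crm_state["up"] = True
second = buf.drain()  # CRM back: the queued lead is delivered
```

Because delivery stops at the first failure, one permanently bad item can block everything behind it; a fuller design moves such items to a dead-letter store after a fixed number of retries.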

2. Idempotency

Design every automation as if it were going to be run ten times for the same event. Before creating a record, always search for a unique identifier (like an email address or order ID). If it exists, update it; do not create a duplicate. Because an idempotent system can safely retry or replay events, it is effectively "self-healing," and idempotency is a core part of any professional automation reliability checklist. This follows the principles of distributed systems reliability pioneered by companies like Google.
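
The search-before-create rule amounts to an upsert keyed on a normalized identifier. The sketch below uses a hypothetical in-memory `Crm` class keyed by email address.

```python
class Crm:
    """Hypothetical in-memory stand-in for a CRM keyed by normalized email."""

    def __init__(self) -> None:
        self.contacts: dict[str, dict] = {}

    def upsert_contact(self, email: str, fields: dict) -> str:
        key = email.strip().lower()  # normalize so "Jane@Example.com " matches "jane@example.com"
        if key in self.contacts:
            self.contacts[key].update(fields)  # exists: update, never duplicate
            return "updated"
        self.contacts[key] = {"email": key, **fields}
        return "created"


crm = Crm()
event = {"email": "Jane@Example.com", "plan": "pro"}
# Replay the same event ten times: one create, nine harmless updates.
results = [crm.upsert_contact(event["email"], {"plan": event["plan"]}) for _ in range(10)]
```

One caveat: search-then-create is not atomic, so two truly simultaneous runs can both miss and both create. Where the destination supports it, a unique constraint on the identifier or a native upsert operation closes that gap.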

3. Observability as a First-Class Citizen

Every automation must have an "Error Path." If a step fails, the system should automatically send a Slack notification or log the failure to a central dashboard with the Raw Payload. If you can't see why it failed, you haven't finished building it. For more on this, see the difference between observability and monitoring.
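
An error path can be as simple as a wrapper that, on any exception, stores the raw payload alongside the error and alerts a human. In this Python sketch, `notify` stands in for whatever posts to Slack (for example, an incoming webhook) and `dead_letters` stands in for the central failure log; both are assumptions, passed in as a plain callable and list so the sketch runs on its own.

```python
import json
import traceback
from datetime import datetime, timezone
from typing import Callable


def run_with_error_path(
    step: Callable[[dict], None],
    payload: dict,
    dead_letters: list,
    notify: Callable[[str], None],
) -> bool:
    """Run one automation step; on failure, keep the raw payload and alert a human."""
    raw = json.dumps(payload, default=str)  # captured before the step can mutate it
    try:
        step(payload)
        return True
    except Exception as exc:
        entry = {
            "failed_at": datetime.now(timezone.utc).isoformat(),
            "step": getattr(step, "__name__", repr(step)),
            "error": repr(exc),
            "traceback": traceback.format_exc(),
            "raw_payload": raw,  # exactly what arrived, so it can be replayed
        }
        dead_letters.append(entry)
        notify(f"Automation step {entry['step']} failed: {entry['error']}")
        return False


def create_invoice(payload: dict) -> None:
    # Hypothetical step that expects a plain integer amount.
    payload["amount_cents"] = int(payload["amount"])


alerts: list[str] = []
dlq: list[dict] = []
ok = run_with_error_path(create_invoice, {"amount": "49,00"}, dlq, alerts.append)
```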

Where This Pattern Fits (and Where It Doesn’t)

Apply these principles when:

- The transaction involves revenue or customer data.

- Failure would require manual data cleanup.

- The system is expected to scale beyond 100 tasks per month.

Ignore these principles when:

- The task is a personal productivity hack.

- The data is ephemeral (e.g., "Post a GIF to Slack when I finish a task").

- The cost of the "Resilient Build" is 100x the value of the task.

How This Appears in Client Systems

When we perform a Systems Diagnostic, we usually hear these symptoms before we even look at the code:

- "I'm terrified to touch the CRM settings because I don't know what will break."

- "We have 300 Zaps, but only one person knows how they work, and they're leaving."

- "Sales says the lead source data is 'usually wrong' so they ignore it."

These are not complaints about software. They are symptoms of Architectural Collapse. The goal of this library is to help you recognize these patterns and move toward a more durable, authority-based system.

System fragility is not inevitable. It is the result of choosing speed over structure. Maturity is the moment an operator realizes that "working" is not the same thing as "reliable." For a deeper dive, review our Automation Failure Modes and Automation Reliability Checklist.
